Budget Cuts for the U.S. AI Safety Institute Following a Policy Reversal

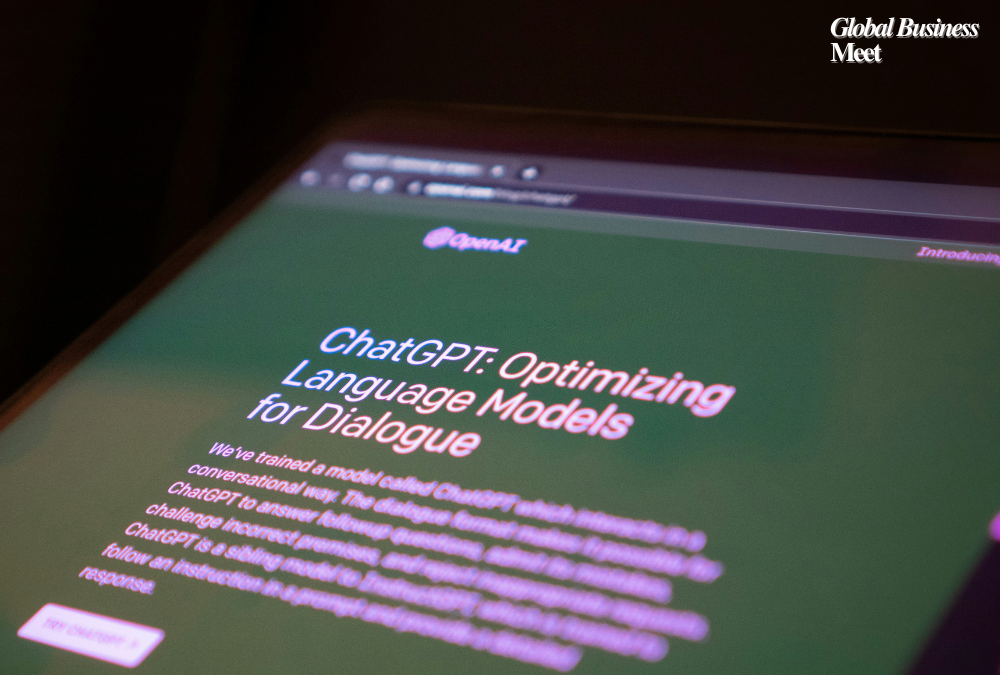

Following a reversal in federal AI policy, the U.S. AI Safety Institute (AISI) is facing major budget cuts and large-scale layoffs. AISI, housed within the National Institute of Standards and Technology (NIST), was set up under an executive order signed in October 2023 by then-President Joe Biden. The current administration, led by President Donald Trump, revoked that order, describing it as a “barrier to American leadership in artificial intelligence.” The move has raised concerns about the future of AI safety policy in the United States.

Decreased Funding and Layoffs

NIST, which oversees AISI, is facing significant budget cuts as part of a broader deregulatory push that includes the reversal of Biden’s AI safety regulations. According to reports, up to 500 employees, including researchers and AI safety specialists, could be let go. These cuts could seriously hamper the government’s capacity to study, test, and develop policies for the safe and responsible use of AI.

AISI was originally created to assess the risks of AI, develop best practices for its use, and work with major companies to put safety measures in place. The Trump administration’s deregulatory agenda, however, is cutting spending on AI oversight, leaving AI governance largely in private hands.

Influence of the Tech Sector

Experts link the administration’s decision to strong financial support from the tech community, most notably from Elon Musk, who contributed a total of $300 million in support of Trump’s campaign. The Department of Government Efficiency (DOGE), headed by Musk, is pushing to deregulate several industries, including artificial intelligence. The department’s role in the dismissal of federal cases against tech companies such as SpaceX and Coinbase has further reinforced the perception that AI regulation is being relaxed to favor large companies.

AI Policy Advocates’ Fears

AI policy advocates and civil society groups have denounced the possible dissolution of AISI. According to the Center for AI Policy (CAIP), laying off several hundred AI researchers would severely weaken the United States’ capacity to address the dangers posed by AI, such as bias in AI models, disinformation, deepfakes, and potential security threats. As AI develops rapidly and is used more widely in vital sectors such as national defense, medicine, and finance, experts argue that AI safety research is more critical than ever.

According to AI experts, without government regulation, AI research may be driven by profit rather than public safety, raising ethical and security concerns. They also warn that other countries may set global standards if the United States does not take the lead on AI security.

Global AI Safety Initiatives

While the U.S. reverses course on its AI safety policy, other countries are heading in the opposite direction. The UK, for example, launched its own AI Safety Institute, backed by a £100 million investment, to carry out thorough AI risk assessments and capability testing. The UK’s approach focuses on identifying AI threats and establishing robust thresholds for mitigating them.

This gap between the U.S. and other countries reflects a growing divergence in approaches to AI governance. Europe and the UK are strengthening their oversight to ensure that AI is developed safely and ethically, while the United States is scaling back its AI safety controls.

Future Consequences

The long-term effects of these policy changes remain unclear. If the United States continues to relax its AI safety rules, it risks losing its voice in setting global AI standards. Without robust AI safety regulation, uncontrolled AI development could also jeopardize public safety and national security.

Experts expect that the United States may have to rethink its strategy for AI regulation as the technology evolves and becomes increasingly pervasive in everyday life. Whether the AI Safety Institute will survive these budget cuts, or be revived under a future administration, remains uncertain. For now, however, the trajectory points away from government oversight and toward industry-driven AI development.