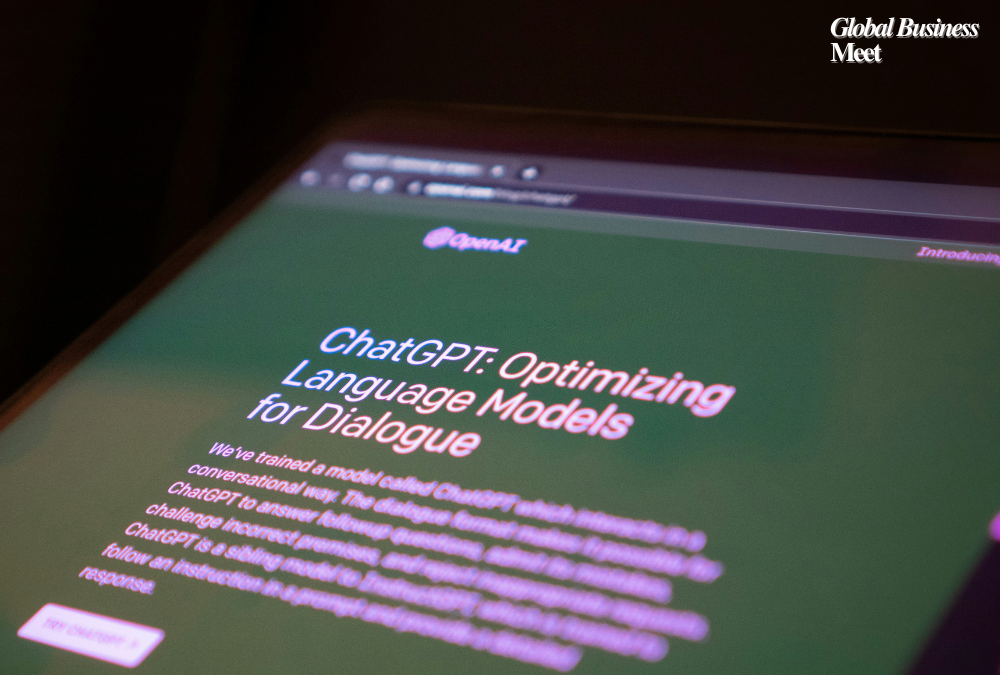

Alphabet, Google’s parent company, has made a significant policy shift: it has revised its artificial intelligence (AI) guidelines and removed earlier pledges that specifically ruled out applying AI to surveillance and weaponry. The change, unveiled in early February 2025, is part of a broader trend in which leading tech companies are working more closely with the military and defense industries.

New AI Principles

When Google first published its AI principles in 2018, it specifically pledged not to pursue:

- technologies that cause or are likely to cause overall harm;

- weapons or other technologies whose principal purpose is to cause or directly facilitate injury to people;

- technologies that gather or use information for surveillance in violation of widely accepted norms;

- technologies whose purpose contravenes widely accepted principles of international law and human rights.

These restrictions have been removed in the latest update. Google DeepMind CEO Demis Hassabis said the rules needed reviewing because of the changing geopolitical situation. In a company blog post, Hassabis and James Manyika, Senior Vice President of Technology and Society, pointed to how rapidly AI has advanced since 2018, noting that it has evolved from a niche research topic into a pervasive technology at the center of countless applications. They argued that democracies should lead AI development, guided by core values such as freedom, equality, and respect for human rights, and that companies and governments sharing those values should work together to build AI that supports national security.

The political environment’s influence

The policy change coincided with a significant shift in the U.S. political environment. President Donald Trump’s administration revoked former President Joe Biden’s executive order promoting the safe, secure, and trustworthy development and use of AI. Following that reversal, technology companies have come under pressure to align their policies with the new administration’s directives. Google CEO Sundar Pichai said the company’s decision to refresh its AI principles was driven by the need to comply with new federal guidelines.

Public and Employee Reaction

The move has sharply divided opinion, both among the public and within Google itself.

At an all-staff meeting, executives explained the policy changes, including the cancellation of several diversity, equity, and inclusion (DEI) initiatives. Chief Legal Officer Kent Walker argued that the AI principles had to be reviewed in light of changing geopolitical conditions, while former diversity head Melonie Parker discussed ending certain DEI training sessions. Despite these explanations, some employees raised ethical concerns about building AI for military use and about diluting the company’s original principles.

Broader Industry Trends

Google’s policy change reflects a broader Silicon Valley trend in which technology firms are working more and more closely with the military and defense sectors.

Google drew its original ethical lines on AI use about seven years ago, after facing strong internal resistance to its participation in Project Maven, a Pentagon program that used AI to analyze drone footage.

Given today’s heightened geopolitical tensions and the rapid pace of AI progress, however, the company now argues that those positions need revisiting. Other firms, such as Meta and Anthropic, have also moved to make their AI models available for military and defense work.

Implications for AI Ethics and Governance

Without clear-cut prohibitions on using AI for weapons and surveillance, serious questions arise about the ethical governance of AI technologies. British computer scientist Stuart Russell has long warned about the dangers of autonomous weapons systems and called for international controls to prevent their misuse. Deploying AI in military settings without adequate ethical safeguards could have unintended consequences, such as escalating conflicts or eroding civil liberties through AI-powered surveillance.

Strategic and Financial Implications

Strategically, technology companies that support defense initiatives can win lucrative contracts and gain influence over national security policy. In 2025, Alphabet plans to spend about $75 billion on capital expenditures, primarily to build out its AI capabilities and infrastructure. The scale of this investment signals a deep commitment to AI, possibly including work with the defense sector.

In summary

Google’s decision to update its AI principles and drop its outright prohibitions on developing surveillance and weapons technology marks a seismic shift in the company’s ethical direction. The move is part of an industry-wide trend toward closer collaboration between the tech and defense sectors, spurred by shifting geopolitical realities and political pressure. Whatever strategic and financial benefits these alliances bring, they raise fundamental ethical questions about AI’s role in society and the consequences of integrating it into military power. As AI develops, industry, governments, and civil society must maintain an ongoing dialogue to ensure that its design and use uphold widely accepted ethical standards and human rights.